Our advanced 3D LiDAR and radar annotation tools and workspace enhancements enable rapid labeling of moving objects across multiple frames, helping you get your model to market faster.

Supports pre-annotations, including manual or model-generated labels, to speed up annotation or validate model performance.

Supports multiple sensors through a fused sensor architecture that synchronizes all assets, giving annotators richer data to label.

Project 3D objects from the point cloud onto 2D RGB images.
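Under the hood, this kind of projection is standard pinhole-camera geometry. The following is a minimal sketch, assuming known camera intrinsics (`fx`, `fy`, `cx`, `cy`, in pixels) and a LiDAR-to-camera extrinsic calibration (`rotation`, `translation`). The function names and the yaw-only cuboid parameterization are hypothetical. The sketch ignores lens distortion and the time offset between the LiDAR sweep and the camera exposure, both of which production tooling typically has to handle.

```python
import math
from typing import List, Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]


def project_to_image(point: Vec3, rotation: Mat3, translation: Vec3,
                     fx: float, fy: float, cx: float, cy: float) -> Optional[Tuple[float, float]]:
    """Project one LiDAR point to pixel coordinates; None if it is behind the camera."""
    # Move the point into the camera frame: p_cam = R * p + t
    x, y, z = (sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
               for i in range(3))
    if z <= 0:
        return None  # behind the image plane, so not visible
    # Perspective division, then scale and shift by the intrinsics
    return fx * x / z + cx, fy * y / z + cy


def cuboid_corners(center: Vec3, size: Vec3, yaw: float) -> List[Vec3]:
    """The 8 corners of a cuboid (length, width, height) rotated by yaw about the z-axis."""
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (-size[0] / 2, size[0] / 2):
        for dy in (-size[1] / 2, size[1] / 2):
            for dz in (-size[2] / 2, size[2] / 2):
                corners.append((center[0] + c * dx - s * dy,
                                center[1] + s * dx + c * dy,
                                center[2] + dz))
    return corners


def project_cuboid(center: Vec3, size: Vec3, yaw: float, rotation: Mat3, translation: Vec3,
                   fx: float, fy: float, cx: float, cy: float):
    """2D box (u_min, v_min, u_max, v_max) enclosing the visible projected corners."""
    pixels = [project_to_image(p, rotation, translation, fx, fy, cx, cy)
              for p in cuboid_corners(center, size, yaw)]
    visible = [p for p in pixels if p is not None]
    if not visible:
        return None
    us = [u for u, _ in visible]
    vs = [v for _, v in visible]
    return min(us), min(vs), max(us), max(vs)


# Usage: LiDAR frame (x forward, y left, z up) -> camera frame (x right, y down, z forward)
R = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
t = (0.0, 0.0, 0.0)
print(project_to_image((10.0, 0.0, 0.0), R, t, 1000.0, 1000.0, 640.0, 360.0))  # (640.0, 360.0)
print(project_cuboid((10.0, 0.0, 0.0), (2.0, 2.0, 2.0), 0.0, R, t, 1000.0, 1000.0, 640.0, 360.0))
```

Taking the min/max of the visible corners gives the 2D box an annotator would see. A cuboid that straddles the image plane would need proper clipping rather than simply dropping the corners behind the camera.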

World coordinate conversion: when you provide the sensor’s pose, our solution converts your point clouds from local (sensor) coordinates to world coordinates.
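Converting to world coordinates amounts to applying the sensor's pose as a rigid transform to every point. The following is a minimal sketch, assuming the pose is given as a position plus a unit quaternion `(w, x, y, z)`. The function names are hypothetical, and the real pose format may differ (for example, a 4x4 matrix).

```python
import math
from typing import Iterable, List, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def quat_to_matrix(q: Quat) -> List[List[float]]:
    """Rotation matrix for a unit quaternion (w, x, y, z)."""
    w, x, y, z = q
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def local_to_world(points: Iterable[Vec3], position: Vec3, orientation: Quat) -> List[Vec3]:
    """Apply the sensor pose to each point: p_world = R * p_local + position."""
    r = quat_to_matrix(orientation)
    return [
        tuple(sum(r[i][j] * p[j] for j in range(3)) + position[i] for i in range(3))
        for p in points
    ]


# Sensor at (100, 200, 0) in the world, yawed 90 degrees to the left about z
half = math.pi / 4
pose_q = (math.cos(half), 0.0, 0.0, math.sin(half))
print(local_to_world([(1.0, 0.0, 0.0)], (100.0, 200.0, 0.0), pose_q))
```

The printed point is roughly (100, 201, 0), up to floating-point rounding. Because every frame carries its own pose, accurate poses place static objects such as parked cars at the same world coordinates in every frame.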

Our automatic ground detection extends a classic morphological approach with semi-supervised learning. It accelerates annotation by removing visual noise and improves accuracy by ensuring ground points are not labeled as another class.
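The exact algorithm is not published here, and the semi-supervised component is out of scope. The following is a minimal sketch of only the classic morphological idea. It assumes a single fixed window rather than the progressively growing windows used in progressive morphological filters. The cloud is gridded, the lowest point per cell is taken, a grey-scale opening (erosion then dilation) is applied to that surface, and points close to the opened surface are labeled ground. `morphological_ground_mask` and its parameters (`cell`, `radius`, `height_threshold`) are hypothetical names chosen for illustration.

```python
import math
from typing import Dict, List, Sequence, Tuple

Cell = Tuple[int, int]


def morphological_ground_mask(points: Sequence[Tuple[float, float, float]],
                              cell: float = 1.0, radius: int = 1,
                              height_threshold: float = 0.3) -> List[bool]:
    """Return True for points judged to be ground (single-window morphological filter)."""
    # 1. Lowest elevation in each occupied grid cell
    min_z: Dict[Cell, float] = {}
    keys: List[Cell] = []
    for x, y, z in points:
        key = (math.floor(x / cell), math.floor(y / cell))
        keys.append(key)
        if key not in min_z or z < min_z[key]:
            min_z[key] = z

    def neighbors(grid: Dict[Cell, float], key: Cell) -> List[float]:
        # Values of occupied cells in the (2*radius+1)^2 window; always includes key itself
        i, j = key
        return [grid[(i + di, j + dj)]
                for di in range(-radius, radius + 1)
                for dj in range(-radius, radius + 1)
                if (i + di, j + dj) in grid]

    # 2. Grey-scale opening: erosion (min filter) followed by dilation (max filter)
    eroded = {k: min(neighbors(min_z, k)) for k in min_z}
    opened = {k: max(neighbors(eroded, k)) for k in eroded}

    # 3. Points within height_threshold of the opened surface are labeled ground
    return [z - opened[k] <= height_threshold for (_, _, z), k in zip(points, keys)]


flat = [(i + 0.5, j + 0.5, 0.0) for i in range(5) for j in range(5) if (i, j) != (2, 2)]
car_roof = (2.5, 2.5, 1.5)  # an object occupying the centre cell
print(morphological_ground_mask(flat + [car_roof])[-1])  # False: not ground
```

The window must be wider than the largest non-ground object. A car spanning more cells than the window would survive the opening and be misread as ground, which is why classic filters grow the window progressively.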

Color map visualization of intensity values, with custom ranges that focus the color map on lower-intensity objects.
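A custom range amounts to clamping intensities to `[lo, hi]` before normalizing, so the whole color ramp is spent on the values you care about. The following is a minimal sketch. The blue-green-red ramp, the `intensity_to_rgb` name, and the 0-255 intensity scale in the usage lines are assumptions, not Sama's actual palette or sensor format.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def intensity_to_rgb(intensity: float, lo: float, hi: float) -> RGB:
    """Map an intensity to a blue -> green -> red ramp over the custom range [lo, hi]."""
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    # Clamp to the chosen range, then normalize to t in [0, 1]
    t = (min(max(intensity, lo), hi) - lo) / (hi - lo)
    if t < 0.5:
        s = t / 0.5  # first half of the ramp: blue -> green
        return 0, round(255 * s), round(255 * (1 - s))
    s = (t - 0.5) / 0.5  # second half of the ramp: green -> red
    return round(255 * s), round(255 * (1 - s)), 0


# Two dim returns (intensity 5 and 15) on an assumed 0-255 scale
for lo, hi in [(0, 255), (0, 20)]:
    print((lo, hi), intensity_to_rgb(5, lo, hi), intensity_to_rgb(15, lo, hi))
```

With the full 0-255 range, both returns land in nearly the same shade of blue. Narrowing the range to 0-20 spreads them across clearly distinct colors.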

Sama offers enterprise-ready computer vision annotation and professional services.

Detect the depth and height of your object of interest for vehicle and pedestrian identification, robotic movement, and interior furniture placement.

Detect and define the location of your target objects. Our video annotation experts use bounding boxes to accurately localize objects in videos used for a variety of applications, including autonomous driving, food system optimization, and more. Bounding boxes are best suited to object tracking and object detection.

Localize and detect complex objects with precision.

Polygons are used as part of our video annotation solutions to improve the accuracy of object detection algorithms. They are best suited to applications that need a more precise definition of an object’s boundaries, such as vehicle detection, crop identification, and more.

Easily detect and annotate pose variations.

Our team uses keypoints, a video annotation technique, to capture the location and orientation of landmarks within a video. Because they track the movement of individual objects, keypoints are best suited to motion tracking, facial landmark detection, and hand gesture recognition.

Annotate straight or curved objects with precision.

Lines and arrows are used in 2D video annotation to highlight important features in a video for object tracking or scene understanding. This is useful for understanding lane markings and traffic patterns or for identifying buildings in a city.

First batch client acceptance rate across 10B points per month

Get models to market 3x faster by eliminating delays, missed deadlines and excessive rework

Lives impacted to date thanks to our purpose-driven business model

2024 Customer Satisfaction (CSAT) score and an NPS of 64

Sama delivers not only accurate video annotation but also insights and recommendations through our vertically integrated platform and human-in-the-loop experts, all while embracing an ethical AI approach. That is why companies come to us when other video annotation solutions fail.

No matter how complex your models, we consistently deliver a 99% client acceptance rate as you scale, even with highly ambiguous images and edge cases.

Sama has over 15 years of experience, and our annotators have an average tenure of more than two years. Vertically segmented teams bring expertise in industry-specific nuances.

As the first AI company to become a certified B Corp, Sama has provided economic opportunities for over 65,000 employees from underserved communities.

ISO-certified delivery centers, a biometrically secured platform, and our in-house workforce help protect your data from unauthorized access and corruption, from ingestion to delivery.


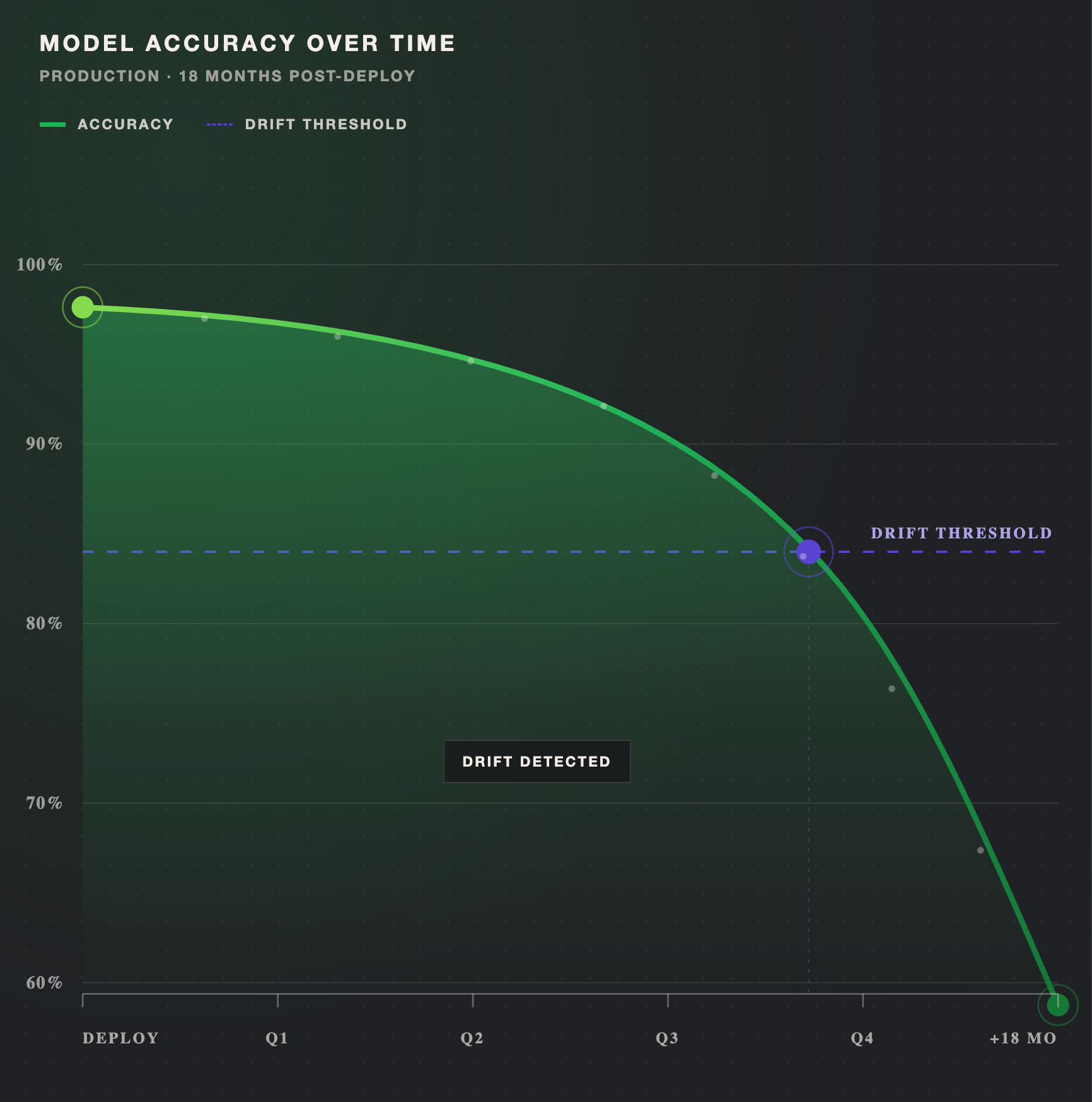

Model drift is the gradual loss of a production model's accuracy as real-world data shifts away from what it learned during training. This guide breaks down the three primary types of drift (data, concept, and label), what causes them, and how to detect drift early using performance monitoring and statistical tests. You'll also learn the prevention practices that keep retraining efficient and models accurate over time.
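The guide mentions statistical tests for detecting drift without naming one. As an assumed example, a two-sample Kolmogorov-Smirnov (KS) test can flag data drift in a single numeric feature. It compares the empirical distribution seen in training with the one seen in production. The following minimal sketch uses the large-sample critical value. `ks_statistic` and `drift_detected` are hypothetical names, and in practice `scipy.stats.ks_2samp` computes the statistic and an exact or asymptotic p-value for you.

```python
import bisect
import math
from typing import Sequence, Tuple


def ks_statistic(reference: Sequence[float], current: Sequence[float]) -> float:
    """Two-sample KS statistic: the largest gap between the two empirical CDFs."""
    a, b = sorted(reference), sorted(current)
    d = 0.0
    # The supremum of |F_a - F_b| is attained at one of the observed values
    for x in a + b:
        fa = bisect.bisect_right(a, x) / len(a)
        fb = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(fa - fb))
    return d


def drift_detected(reference: Sequence[float], current: Sequence[float],
                   alpha: float = 0.05) -> Tuple[bool, float, float]:
    """Flag drift when D exceeds the large-sample critical value at level alpha."""
    n, m = len(reference), len(current)
    d = ks_statistic(reference, current)
    c_alpha = math.sqrt(-math.log(alpha / 2) / 2)  # about 1.358 for alpha = 0.05
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    return d > critical, d, critical


training = [i / 100 for i in range(100)]   # feature values seen in training
production = [x + 0.5 for x in training]   # same feature, shifted in production
print(drift_detected(training, training))  # no drift: D = 0.0
print(drift_detected(training, production))
```

A KS test only covers data drift on one feature at a time. Detecting concept or label drift generally also requires ground-truth labels from production, which is where performance monitoring comes in.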